Flattening the residual mountain.

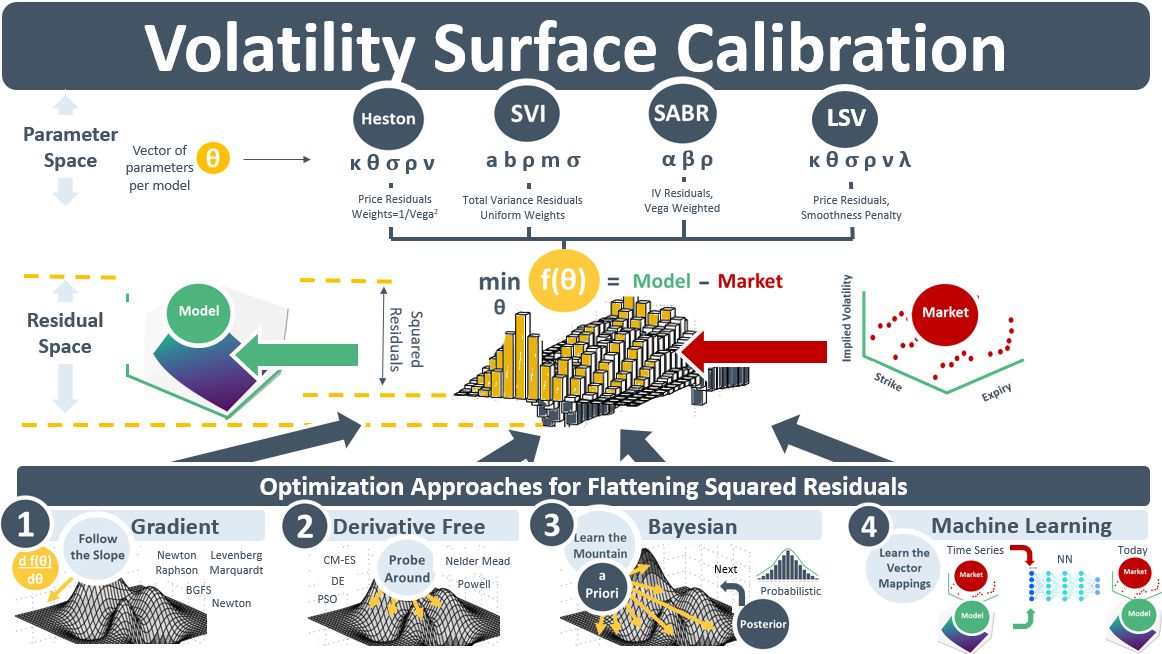

The more volatile the price of an option’s underlying asset, the better the option’s chance of finishing in the money (ITM), and therefore the more valuable the option. The volatility embedded in an option’s price is referred to as implied volatility (IV). Option prices are quoted for different maturities and strike prices. When the option price or its IV is plotted as a 3D surface over strike and maturity, it looks like the red “market” surface on the right-hand side of the diagram above. For non-traded over-the-counter (OTC) options, this surface acts as an input to the models used to value them. A key step in option pricing models is the conversion of this red market surface, which is quoted only at discrete strikes and maturities, into the continuous, smooth green surface on the left-hand side. Quantitative models such as Heston, SVI, SABR and LSV perform this task. They contain parameters which, when assigned the correct numeric values, generate a model surface whose shape closely resembles that of the market surface.
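To make implied volatility concrete, here is a minimal sketch, assuming a Black-Scholes European call with no dividends. The function names `bs_call` and `implied_vol` and the sample inputs are illustrative choices, not part of any library. It backs the volatility out of a price by bisection, which works because the call price rises as volatility rises.

```python
import math

def norm_cdf(x):
    """Standard normal cumulative distribution function."""
    return 0.5 * (1.0 + math.erf(x / math.sqrt(2.0)))

def bs_call(spot, strike, maturity, rate, vol):
    """Black-Scholes price of a European call (assumes no dividends)."""
    sqrt_t = math.sqrt(maturity)
    d1 = (math.log(spot / strike) + (rate + 0.5 * vol * vol) * maturity) / (vol * sqrt_t)
    d2 = d1 - vol * sqrt_t
    return spot * norm_cdf(d1) - strike * math.exp(-rate * maturity) * norm_cdf(d2)

def implied_vol(price, spot, strike, maturity, rate, lo=1e-4, hi=5.0, tol=1e-8):
    """Find the vol that reproduces `price`, by bisection on [lo, hi]."""
    for _ in range(200):
        mid = 0.5 * (lo + hi)
        if bs_call(spot, strike, maturity, rate, mid) > price:
            hi = mid  # model price too high, so the vol guess is too high
        else:
            lo = mid
        if hi - lo < tol:
            break
    return 0.5 * (lo + hi)

# Illustrative inputs only: price a call at 25% vol, then recover the vol from the price.
price = bs_call(100.0, 110.0, 0.5, 0.02, 0.25)
recovered = implied_vol(price, 100.0, 110.0, 0.5, 0.02)
print(round(recovered, 6))
```

Repeating this inversion for every quoted strike and maturity would give the discrete points of a market IV surface.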

The process of assigning numeric values to parameters to generate a model surface that matches the shape of the market surface is called calibration. A residual is the difference between the model-calculated price and the market price at a point on the surface. The residuals are squared so that positive and negative values do not cancel each other out. When plotted in 3D, the squared residuals form a series of mountainous-looking peaks and valleys. The objective of a calibration is to flatten the whole mountain. It does this by finding the global minimum of a single objective function: the weighted sum of the squared residuals.
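The objective function can be sketched in a few lines. This is a minimal illustration under stated assumptions: `toy_model_iv` is a hypothetical two-parameter smile invented for the example (it is not Heston, SVI, SABR or LSV), the residuals are taken on implied vols rather than prices, and the quotes are synthetic, generated from the toy model itself.

```python
import math

def toy_model_iv(params, strike, maturity):
    """Hypothetical smile: a flat level plus curvature in log-moneyness around strike 100."""
    level, curvature = params
    m = math.log(strike / 100.0)
    return level + curvature * m * m / math.sqrt(maturity)

def objective(params, quotes):
    """Weighted sum of squared residuals over all quoted points."""
    total = 0.0
    for strike, maturity, market_iv, weight in quotes:
        residual = toy_model_iv(params, strike, maturity) - market_iv
        total += weight * residual * residual
    return total

# Synthetic "market" quotes built from known parameters, all with equal weight.
true_params = (0.20, 0.50)
quotes = [
    (k, t, toy_model_iv(true_params, k, t), 1.0)
    for k in (80.0, 90.0, 100.0, 110.0, 120.0)
    for t in (0.25, 1.0)
]

print(objective(true_params, quotes))   # the mountain is flat at the true parameters
print(objective((0.25, 0.50), quotes))  # a wrong level lifts the objective above zero
```

In practice the weights might favour liquid or at-the-money quotes. Here they are all set to 1.0 purely for simplicity.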

The optimizer moves through parameter space, testing different combinations of parameter values in search of the global minimum. There are four categories of optimization approach. 1) Gradient. After an initial guess, gradient approaches use the derivatives of the objective with respect to the model’s parameters to tell you immediately which direction is steepest downhill. You follow that path until you find the bottom of the hill, the local minimum. 2) Derivative-Free Optimization (DFO) approaches probe around until they find a nearby point that results in a slight overall flattening of the mountain, then repeat. While DFO approaches are generally slower than gradient approaches, they can be extended to search across valleys to find the global minimum. 3) Bayesian Optimization (BO) builds a probability map of the mountain, its peaks and valleys, before walking to the points that are likely to give the most information. It stores this information, then repeats until it converges on the global minimum. It is typically less expensive computationally than global DFO. 4) Machine learning (ML) methods ignore the mountain. Instead, they learn the weights of nodes in neural networks that define a mapping from the data elements of the red market surface to the green model surface.
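The first two categories can be illustrated on a toy one-parameter mountain with two valleys. This is only a sketch: the function `f` is made up for the example, `pattern_search` is one simple derivative-free scheme among many, and `multi_start` is a deliberately naive way of extending it across valleys. Bayesian and ML approaches are not shown.

```python
def f(x):
    """Toy objective: a shallow valley near x = 0.96 and a deeper one near x = -1.04."""
    return (x * x - 1.0) ** 2 + 0.3 * x

def grad_f(x):
    """Analytic derivative of f."""
    return 4.0 * x * (x * x - 1.0) + 0.3

def gradient_descent(x, learning_rate=0.01, steps=5000):
    """Gradient approach: repeatedly step in the downhill direction."""
    for _ in range(steps):
        x -= learning_rate * grad_f(x)
    return x

def pattern_search(x, step=0.5, tol=1e-8):
    """Derivative-free: probe left and right, move if lower, otherwise shrink the step."""
    while step > tol:
        best = min((x - step, x + step), key=f)
        if f(best) < f(x):
            x = best
        else:
            step *= 0.5
    return x

def multi_start(starts):
    """Naive global extension: run the local search from several starts, keep the lowest."""
    return min((pattern_search(s) for s in starts), key=f)

local_by_gradient = gradient_descent(1.5)
local_by_probing = pattern_search(1.5)
global_candidate = multi_start([-2.0, -1.0, 0.0, 1.0, 2.0])
print(local_by_gradient, local_by_probing, global_candidate)
```

Started on the right-hand slope, both local methods settle in the shallow valley. Only the multi-start search reaches the deeper one, at the cost of many more function evaluations.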

Levenberg-Marquardt is a common gradient method for finding the local minimum of a valley in the residual mountain. It is often combined with ML or BO methods, which help ensure it starts its search in the right valley.
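A bare-bones version of Levenberg-Marquardt fits in a short script. The sketch below makes several assumptions: `model_iv` is a hypothetical two-parameter smile (not SVI, SABR or Heston), the Jacobian is approximated by finite differences, the damping follows Marquardt’s diagonal scaling, and the starting guess is simply hard-coded where a global stage might otherwise supply it. Production calibrations would normally rely on a tested library routine instead.

```python
import math

def model_iv(params, strike):
    """Hypothetical smile, nonlinear in `curvature`; assumes 1 + curvature * m^2 stays positive."""
    level, curvature = params
    m = math.log(strike / 100.0)
    return level * math.sqrt(1.0 + curvature * m * m)

def residuals(params, quotes):
    """Model minus market at each quoted strike."""
    return [model_iv(params, k) - iv for k, iv in quotes]

def jacobian(params, quotes, h=1e-7):
    """Forward-difference Jacobian: one row per quote, one column per parameter."""
    base = residuals(params, quotes)
    cols = []
    for j in range(len(params)):
        bumped = list(params)
        bumped[j] += h
        cols.append([(b - r) / h for b, r in zip(residuals(bumped, quotes), base)])
    return [list(row) for row in zip(*cols)]

def levenberg_marquardt(params, quotes, damping=1e-3, iterations=50):
    params = list(params)
    cost = sum(r * r for r in residuals(params, quotes))
    for _ in range(iterations):
        r = residuals(params, quotes)
        J = jacobian(params, quotes)
        # Damped normal equations for two parameters:
        # (J^T J + damping * diag(J^T J)) step = -J^T r
        a11 = sum(row[0] * row[0] for row in J) * (1.0 + damping)
        a12 = sum(row[0] * row[1] for row in J)
        a22 = sum(row[1] * row[1] for row in J) * (1.0 + damping)
        g1 = sum(row[0] * ri for row, ri in zip(J, r))
        g2 = sum(row[1] * ri for row, ri in zip(J, r))
        det = a11 * a22 - a12 * a12
        step = [(-g1 * a22 + g2 * a12) / det, (g1 * a12 - g2 * a11) / det]
        trial = [p + s for p, s in zip(params, step)]
        trial_cost = sum(x * x for x in residuals(trial, quotes))
        if trial_cost < cost:
            params, cost = trial, trial_cost
            damping *= 0.1   # step helped: behave more like Gauss-Newton
        else:
            damping *= 10.0  # step hurt: behave more like cautious gradient descent
    return params, cost

# Synthetic quotes generated from known parameters, for illustration only.
true_params = [0.20, 4.0]
quotes = [(k, model_iv(true_params, k)) for k in (80.0, 90.0, 100.0, 110.0, 120.0)]
fitted, final_cost = levenberg_marquardt([0.30, 1.0], quotes)
print(fitted, final_cost)
```

The damping factor is what lets the method slide between fast Gauss-Newton steps near the bottom of a valley and smaller, safer steps further up the slope.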